Error output is available in most of the Data Flow Component (DFC). We have to configure this output pipeline to capture or to move the error data from the normal data flow to avoid unnecessary package failure.

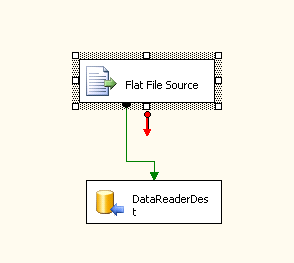

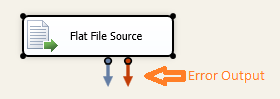

Error output pipeline is marked in red color in Flat File Source component.

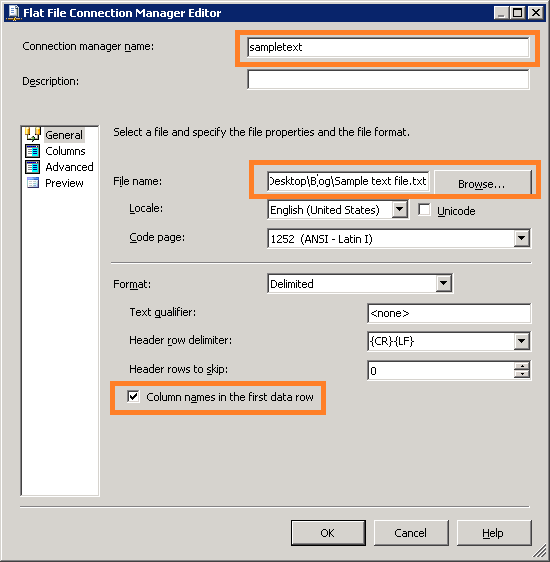

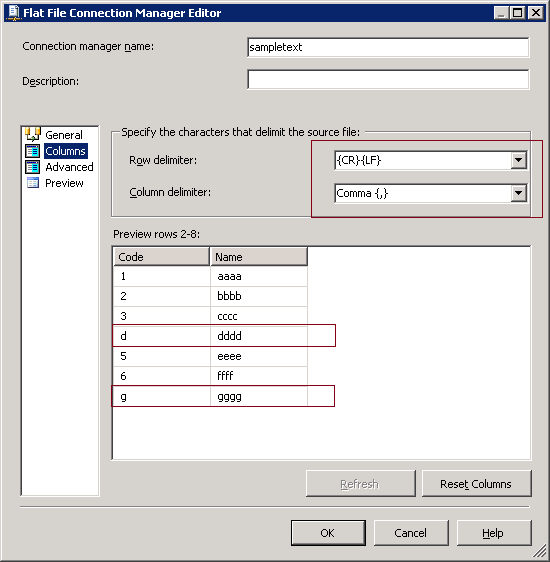

I have comma-separated value in a flat file with two columns (code, Name). Code is an integer value and name is a varchar(50) data type configured in the flat file connection manager. But, I need my package execution uninterrupted. Because, I know some the codes in the flat files are characters. So, flat file reader component will fail reading the character value. But, I want to redirect the error data to separate table.

Implementation

Step 1: Create a new package and add data flow task.

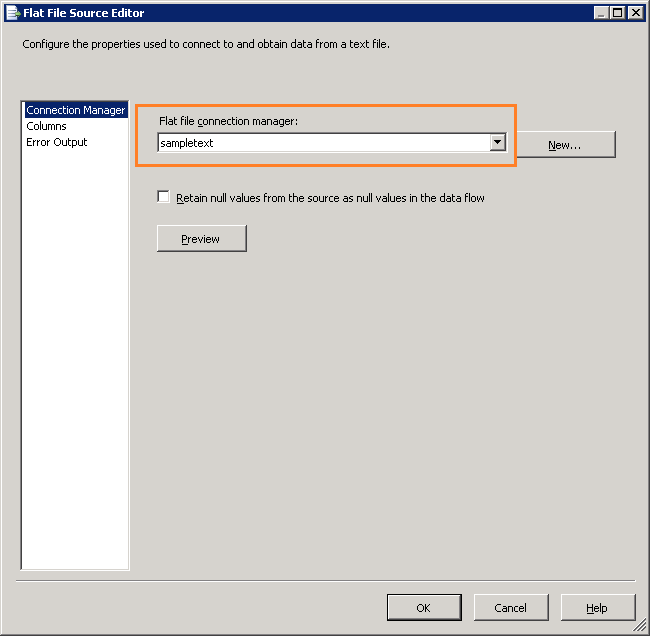

Step 2: Go to Data Flow Task and add flat file reader component and configure the task.

Create flat file connection

Step 3: Connect DFT source output (green pipeline) to destination task. In this demo, I am using Data Reader Destination task. Valid output will go to this destination task.

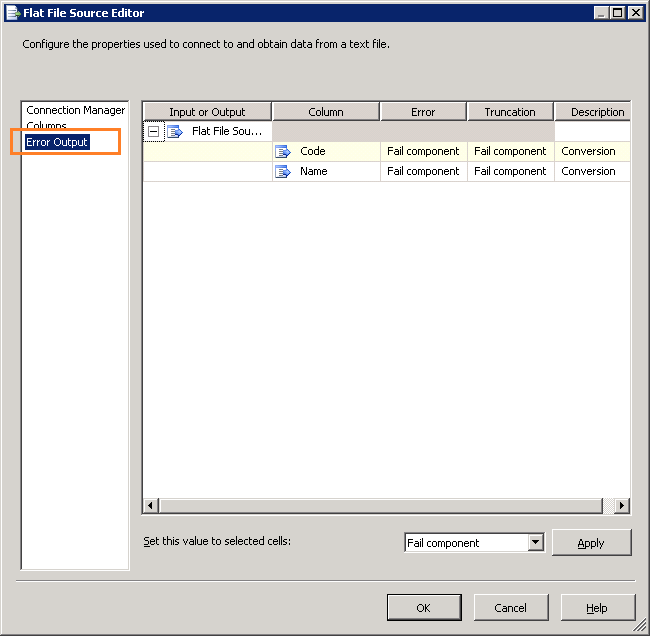

Step 4: Configure Error output in Flat File Source task. Go to task Edit window and select error tab.

Step 4: Configure Error output in Flat File Source task. Go to task Edit window and select error tab.

Now, I am going to configure error output for the column “Code”. But, we can also set to all columns.

Now, I am going to configure error output for the column “Code”. But, we can also set to all columns.

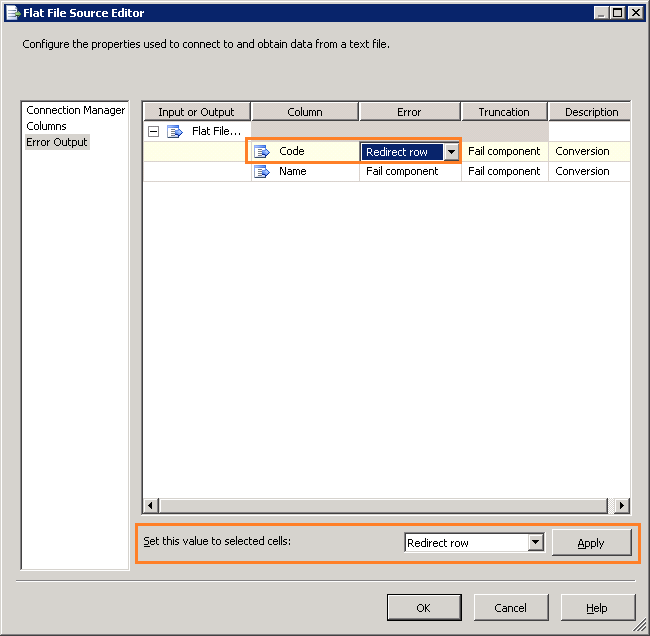

I am redirecting the error rows to the error output of the Flat File source component.

We have done with error output configuration. Now, create a table to store the error data for further analysis.

We have done with error output configuration. Now, create a table to store the error data for further analysis.

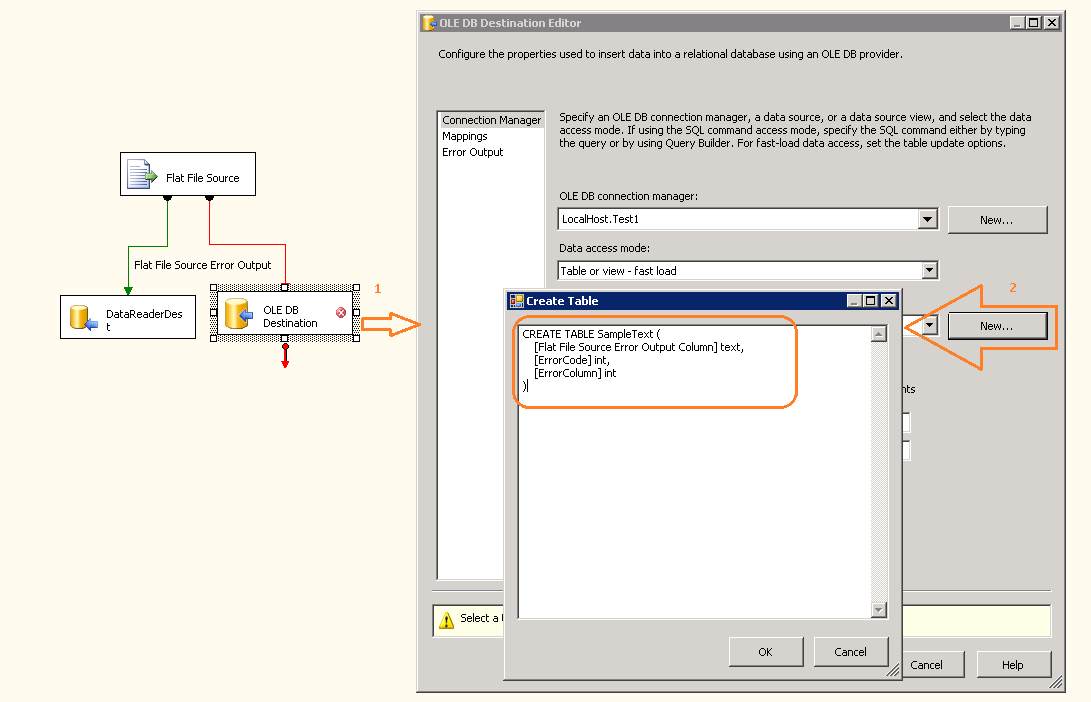

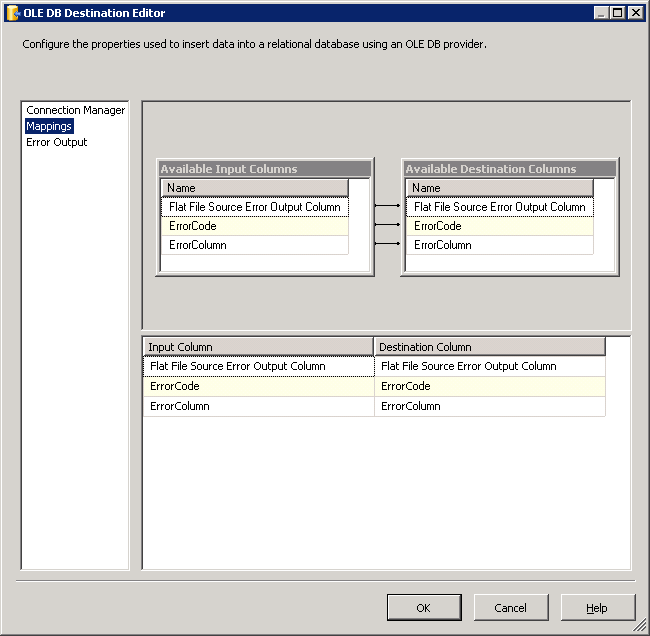

Step 5: Add OLE DB destination task. We are going to store the error data to a SQL table. I take table schema from the OLE DB destination task.

Map the error output columns to the table schema

Map the error output columns to the table schema

Step 6: Execute this package

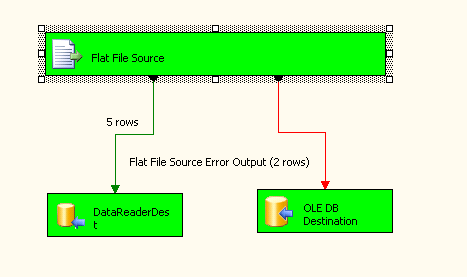

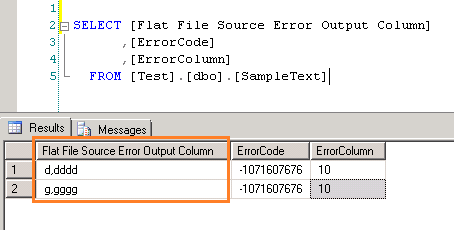

We have got 5 valid rows and 2 error rows. Lets explore the error output table.

We have got 5 valid rows and 2 error rows. Lets explore the error output table.

Conclusion

We have learned how to redirect the error data in data flow without package failure.

Nice one.

Thanks Rohit. Keep coming to this site and give your valuable comments.

Good article. 🙂

Thanks Ram!

It is really awesome solution.

many thanks, Ram